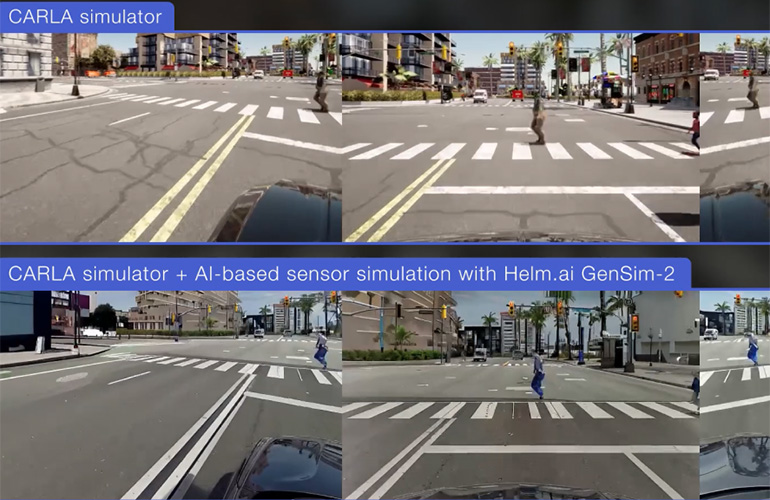

With GenSim-2, developers can modify weather and lighting conditions such as rain, fog, snow, glare, and time of day or night in video data. | Source: Helm.ai

Helm.ai last week introduced the Helm.ai Driver, a real-time deep neural network, or DNN, transformer-based path-prediction system for highway and urban Level 4 autonomous driving.

The company demonstrated the model’s capabilities in a closed-loop environment using its proprietary GenSim-2 generative AI foundation model to re-render realistic sensor data in simulation.

“We’re excited to showcase real-time path prediction for urban driving with Helm.ai Driver, based on our proprietary transformer DNN architecture that requires only vision-based perception as input,” stated Vladislav Voroninski, Helm.ai’s CEO and founder.

“By training on real-world data, we developed an advanced path-prediction system which mimics the sophisticated behaviors of human drivers, learning end to end without any explicitly defined rules.”

“Importantly, our urban path prediction for [SAE] L2 through L4 is compatible with our production-grade, surround-view vision perception stack,” he continued.

“By further validating Helm.ai Driver in a closed-loop simulator, and combining with our generative AI-based sensor simulation, we’re enabling safer and more scalable development of autonomous driving systems.”

Founded in 2016, Helm.ai develops artificial intelligence software for advanced driver-assist systems (ADAS), autonomous vehicles, and robotics.

The company offers full-stack, real-time AI systems, including end-to-end autonomous systems, plus development and validation tools powered by its Deep Teaching methodology and generative AI.

Redwood City, Calif.-based Helm.ai collaborates with global automakers on production-bound projects.

In December, it unveiled GenSim-2, its generative AI model for creating and modifying video data for autonomous driving.

Helm.ai Driver learns in real time

Helm.ai said its new model predicts the path of a self-driving vehicle in real time using only camera-based perception, with no HD maps, lidar, or additional sensors required.

It takes the output of Helm.ai’s production-grade perception stack as input, making it directly compatible with highly validated software.

This modular architecture enables efficient validation and greater interpretability, said the company.

Trained on large-scale, real-world data using Helm.ai’s proprietary Deep Teaching methodology, the path-prediction model exhibits robust, human driver-like behaviors in complex urban driving scenarios, the company claimed.

This includes handling intersections, turns, obstacle avoidance, passing maneuvers, and response to vehicle cut-ins.

These are emergent behaviors from end-to-end learning, not explicitly programmed or tuned into the system, Helm.ai noted.

To demonstrate the model’s path-prediction capabilities in a realistic, dynamic environment, Helm.ai deployed it in a closed-loop simulation using the open-source CARLA platform (see video above).

In this setting, Helm.ai Driver continuously responded to its environment, just as it would when driving in the real world.

In addition, Helm.ai said GenSim-2 re-rendered the simulated scenes to produce realistic camera outputs that closely resemble real-world visuals.

Helm.ai said its foundation models for path prediction and generative sensor simulation are key building blocks of its AI-first approach to autonomous driving.

The company plans to continue delivering models that generalize across vehicle platforms, geographies, and driving conditions.

The post Helm.ai launches AV software for up to SAE L4 autonomous driving appeared first on The Robot Report.
